Cognitive Offloading and AI in Schools: What It Is, Why It Matters, and How to Choose the Right Tools

Cognitive offloading is becoming a key concern for school leaders and the Department for Education, with the use of AI in education on the rise. In fact, the DfE’s 2024 generative AI guidance states that developers must “mitigate the potential for cognitive deskilling, or long-term developmental harm to learners”.

With pupils having ready access to artificial intelligence, and educational environments seeking to harness the use of these digital technologies, the risk of AI impacting negatively on pupils’ cognitive performance is no longer theoretical; it is very real. Research supports the DfE’s concern: an independent randomised controlled trial found pupils using AI tools to assist their learning scored 57.5% on a retention test, whereas those who did not use the AI tools scored an average of 68.5%.

This article outlines the difference between cognitive offloading and cognitive outsourcing, what the research says about AI technology, the benefits and risks of using artificial intelligence in education, and how school leaders can evaluate artificial intelligence tools so they scaffold thinking and learning rather than replace it with cognitive outsourcing.

Key takeaways

- The DfE expects schools to take an active, risk-based approach to AI tools, requiring them to assess how artificial intelligence is used by pupils, to put safeguards in place, and to consider the potential impact on learning and development, including the risk that these tools reduce meaningful cognitive processes if used inappropriately.

- Pupils using AI tools scored 57.5% on a retention test, compared to 68.5% for those who did not – evidence that when cognitive offloading tips into cognitive outsourcing, learning suffers.

- The key distinction is between cognitive offloading (a form of scaffolding) and cognitive outsourcing: offloading enables cognitive processes, while outsourcing replaces thinking.

- Effective AI systems promote active cognitive engagement, not passive consumption of AI-generated content.

- Schools should evaluate whether tools scaffold problem solving, track cognitive tasks, and build critical thinking skills.

What is cognitive offloading?

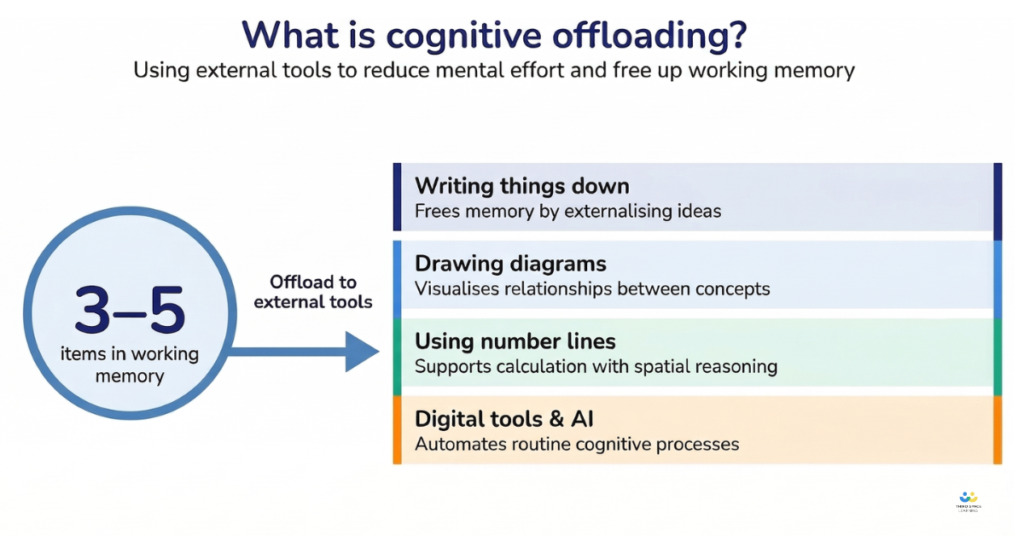

Cognitive offloading refers to the use of external tools, digital or otherwise, to reduce the mental effort required to complete a task. In the era of AI, it may be surprising to learn that humans have always resorted to cognitive offloading.

Working memory can typically hold only 3–5 items or chunks of information. Using external systems for cognitive offloading, such as writing things down, drawing diagrams and using number lines, therefore allows limited cognitive resources to cope with complex tasks.

Cognitive psychologist Paul A. Kirschner reminds us that cognitive offloading itself is not something to worry about. For example, writing down intermediate steps in a maths problem reduces cognitive overload and allows pupils to continue thinking effectively.

The issue is not cognitive offloading itself but what happens when the use of AI tools replaces thinking altogether.

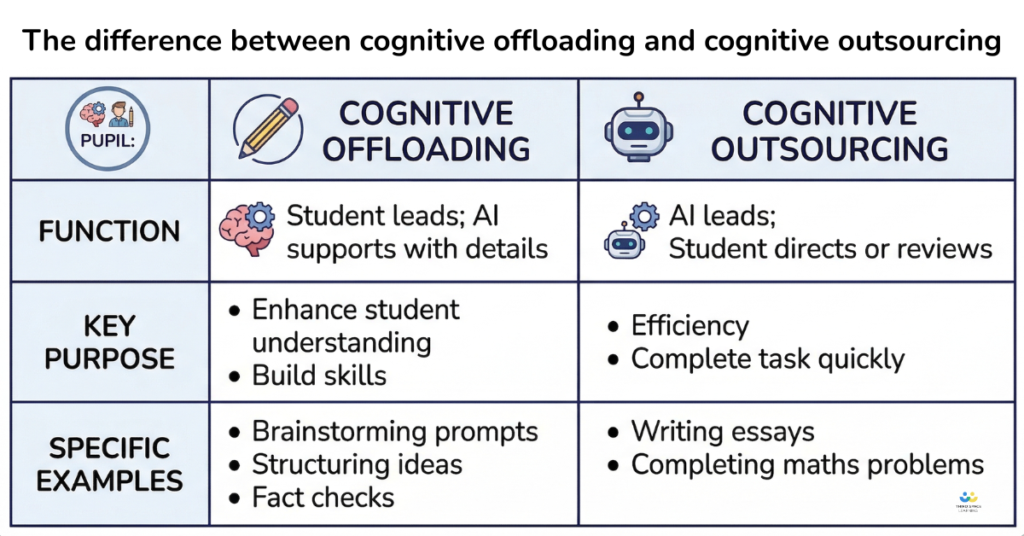

The difference between using AI for cognitive offloading and cognitive outsourcing a task

Traditional cognitive offloading supports human thinking and learning. But modern AI tools go one step further and take over the human thinking process. AI tools generate answers and explanations, solve problems, and produce extended responses with minimal human input.

When cognitive offloading relies too heavily on external systems, it becomes cognitive outsourcing. As defined by Kirschner, cognitive outsourcing is “the deliberate transfer of a function that would normally be performed internally to an external agent that performs it instead.”

It’s simple to distinguish between the two:

- Cognitive offloading: the AI tool assists the pupil in performing the cognitive tasks

- Cognitive outsourcing: the AI system performs the cognitive tasks

The rising use of generative artificial intelligence, such as ChatGPT for maths, is pushing more pupils from offloading into outsourcing. They no longer engage in the mental work of structuring ideas, recalling knowledge, or solving a problem. Pupils simply prompt and receive the solution.

In doing so, they outsource the cognitive processes that drive learning. And when those processes are removed, the long-term benefits (stronger memory, deeper understanding, improved critical thinking and independent thought) are also reduced.

Cognitive offloading or cognitive outsourcing? A simple maths example

Imagine a child solving a multi-step calculation: 38 + 29.

There are several ways they might find the answer:

- Calculate it mentally

- Write down some working

- Follow a structured written method

- Use a calculator

- Ask an AI system

Which of these involves cognitive offloading? Which involves outsourcing?

Method 1

The first method involves neither. The child does all the mental work, holding everything in their working memory.

Methods 2 and 3

The second and third methods involve cognitive offloading. By using external aids (such as pencil and paper, or a mini whiteboard) and writing down intermediate results, the pupil reduces the strain on working memory while continuing to engage in the cognitive processes required for solving problems.
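As an illustration of what "writing down intermediate results" might look like for 38 + 29, here is a minimal Python sketch of one common written method, partitioning into tens and ones. The function name `partition_addition_steps` is hypothetical, and partitioning is only one of several methods a pupil might use; the point is that each line of working is a step the pupil still has to think through.

```python
def partition_addition_steps(a: int, b: int) -> list[str]:
    """Return the lines of written working for adding two
    two-digit numbers by partitioning into tens and ones."""
    a_tens, a_ones = divmod(a, 10)
    b_tens, b_ones = divmod(b, 10)
    tens_total = (a_tens + b_tens) * 10   # add the tens first
    ones_total = a_ones + b_ones          # then the ones
    return [
        f"{a_tens * 10} + {b_tens * 10} = {tens_total}",
        f"{a_ones} + {b_ones} = {ones_total}",
        f"{tens_total} + {ones_total} = {tens_total + ones_total}",
    ]


# Each printed line is an intermediate result held on paper
# rather than in working memory.
for line in partition_addition_steps(38, 29):
    print(line)
```

The pupil still does every calculation; the paper only holds the intermediate results (50 and 17) so they do not have to be kept in mind at once.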

Methods 4 and 5

The fourth and fifth methods move towards outsourcing. Calculator use may still involve some thinking, depending on how it is used, and can function as a form of cognitive offloading when it supports rather than replaces the underlying process.

But asking AI tools for the answer removes the need for analysis, thinking, and sustained effort. At that point, the pupil is no longer learning how to solve the problem; they are simply asking for the answer.

What the research says about AI use and pupil thinking

The evidence on memory and knowledge retention

A growing body of research points to the same conclusion: when AI tools reduce the mental effort involved in learning, pupils retain less. Cognitive effort is not a barrier to learning; it is the mechanism through which learning happens.

An independent randomised controlled trial found that pupils using AI tools to assist their learning scored 57.5% on a retention test, compared to 68.5% for those who did not use AI, a gap of 11 percentage points. The difference is statistically significant and directly linked to reduced cognitive effort.

This aligns with what we know about learning. Effort matters, and while cognitive offloading can preserve effort, outsourcing reduces it. When pupils engage deeply in cognitive tasks, they build stronger memory and more secure knowledge.

Bjork and Bjork’s research on ‘desirable difficulties’ reinforces this point: learning is most effective when it requires effort, even when that effort feels challenging. Separately, a 2025 study by Gerlich from SBS Swiss Business School, involving 666 participants, found that increasing reliance on AI correlated with reduced critical thinking skills.

Cognitive load theory

Dan Willingham captures the principle simply: ‘we remember what we think about’. This is supported by Cognitive Load Theory (CLT), which holds that we learn by effortfully processing information in our working memory before storing it in long-term memory. If thinking is the mechanism through which learning happens, then tools which remove thinking necessarily remove learning.

The negative impact of AI and cognitive outsourcing

The negative impact is clear: more frequent use of AI tools led to increased cognitive outsourcing, which in turn reduced active participation in key cognitive processes and led to a decline in cognitive autonomy.

Research in Frontiers in Psychology reinforces this point, highlighting that cognitive abilities develop through sustained engagement in thinking and other demanding cognitive tasks – especially during adolescence.

The pattern is consistent across other studies. Findings suggest that when artificial intelligence reduces mental effort, actual learning and remembering are reduced.

Why younger pupils may be most vulnerable

In primary education, pupils are building foundational knowledge. This requires repetition, practice, and sustained cognitive engagement. Number fluency, fraction understanding and times tables all depend on repeated mental work. If AI tools are used in ways that reduce that effort, the impact is cumulative. Weak foundations in memory and knowledge lead to later difficulties in solving problems and critical thinking.

Education researcher Umberto León Domínguez points out that “intellectual capabilities essential for success in modern life need to be stimulated from an early age, especially during adolescence.”

In secondary education, the demands shift, but the principle remains. GCSE success depends on independent thinking, solving multi-step problems, and the ability to apply knowledge in unfamiliar contexts. Exams do not assess whether pupils can prompt an AI system; they assess whether pupils can think and remember.

When AI can support learning

The evidence is not one-sided. The recent Brookings report ‘A New Direction For Students In An AI World’ identifies two potential outcomes: AI can either enrich or diminish learning.

When used well, AI tools can provide adaptive assistance, responding to individual differences in pupils’ knowledge, pace, and understanding. They can offer immediate feedback on tasks, helping pupils identify errors and refine their problem-solving and decision-making strategies in real time. In some cases, AI systems can increase student engagement by making abstract ideas more accessible, breaking down complex cognitive tasks, and helping pupils who might otherwise struggle to participate fully by encouraging effective cognitive offloading.

However, the Brookings report concludes that at present, the risks outweigh the benefits, particularly in relation to pupils’ cognitive development. Artificial intelligence can aid learning when it is designed to enhance cognitive engagement – when it prompts pupils to think, to reflect, to engage in solving problems, and to process knowledge actively – but when pupils become overly reliant on AI, it replaces those cognitive processes rather than supporting them.

What the DfE expects from schools

The concern that using AI in education replaces thinking is not speculative. In the Department for Education’s Call for Evidence on artificial intelligence in education, the single most prominent concern raised by educators and experts was over-reliance on AI in schools. It is the central concern emerging from current educational practices.

The DfE guidance sets a clear expectation that schools must take a deliberate, risk-based approach to how AI tools and AI systems are used, especially by pupils. Three requirements stand out.

- Any use of artificial intelligence must be carefully assessed, with schools weighing the benefits against the risks in their specific context.

- Pupil use of artificial intelligence should only happen with appropriate safeguards in place, including supervision, filtering, and clear boundaries.

- Schools must consider the impact of reliance on AI on learning itself. The guidance explicitly highlights the need for professional judgement: technology should aid teaching and learning, not replace it, and it cannot substitute for human expertise or the teacher–pupil relationship.

In short, schools are not passive users of digital technologies but are responsible for shaping how those tools influence cognitive engagement and therefore long-term learning. The DfE’s position is not simply about safe use; it is about effective use – and that ultimately comes down to whether pupils are maintaining cognitive autonomy and still doing the thinking for themselves.

How to tell the difference between AI that offloads and AI that outsources

Thankfully, the distinction between cognitive offloading and cognitive outsourcing is so clear that there is a simple litmus test for AI tools:

Does it step in and think? Or does it keep the pupil thinking?

However, there are other questions that you can ask to help you really discern whether or not an AI system is supporting a pupil on their learning journey.

The key questions to ask about any AI tool

The following questions can help you to take a practical approach to the evaluation of AI tools. The questions centre on learning, cognitive processes, and pupil thinking, rather than on the technical aspects of any AI tool. For example, these questions could be asked in procurement conversations, annual reviews, and governor meetings.

- Does the tool provide the answer directly, or guide the pupil through the process? This is the clearest signal. Tools that default to answers increase cognitive outsourcing. Tools that guide thought processes support learning, often through cognitive offloading.

- Does it track where a pupil got stuck, or only whether they got the final answer right? If a system only reports outcomes, it is blind to the cognitive tasks that matter. Strong AI systems track the process of thinking, not just the result.

- Does it use hints and scaffolding before modelling the solution? The presence or absence of staged support tells you whether the tool is designed for cognitive engagement or convenience.

- Does it require the pupil to respond before moving on? Without active participation, there is no mental work. Tools that allow pupils to passively consume AI-generated content reduce learning.

- Can the supplier point to evidence on how the product addresses cognitive deskilling risk? Any credible provider of AI tools should be able to explain how their design reduces cognitive outsourcing and supports critical thinking skills.

These questions shift the focus from features to function. Not what the technology can do, but what it makes pupils do.
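To make the second question concrete, the sketch below shows one way a tool might record the process rather than only the outcome. It is a hypothetical data structure for illustration, not any supplier's actual reporting format; the names `StepRecord`, `SessionLog` and `stuck_points` are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class StepRecord:
    step: str        # e.g. "expand brackets"
    attempts: int    # attempts the pupil made on this step
    hints_used: int  # hints shown before a correct response


@dataclass
class SessionLog:
    pupil: str
    final_answer_correct: bool
    steps: list[StepRecord] = field(default_factory=list)

    def stuck_points(self) -> list[str]:
        """Steps where the pupil needed at least one hint."""
        return [s.step for s in self.steps if s.hints_used > 0]


log = SessionLog(
    pupil="Pupil A",
    final_answer_correct=True,
    steps=[
        StepRecord("expand brackets", attempts=3, hints_used=2),
        StepRecord("subtract 6 from both sides", attempts=1, hints_used=0),
        StepRecord("divide both sides by 2", attempts=1, hints_used=0),
    ],
)

# An outcome-only report would show just "correct"; the process
# log also shows where support was needed.
print(log.final_answer_correct, log.stuck_points())
```

In this made-up session the final answer is right, yet the log still shows that expanding brackets needed two hints. That is the kind of information an outcome-only report cannot give a teacher.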

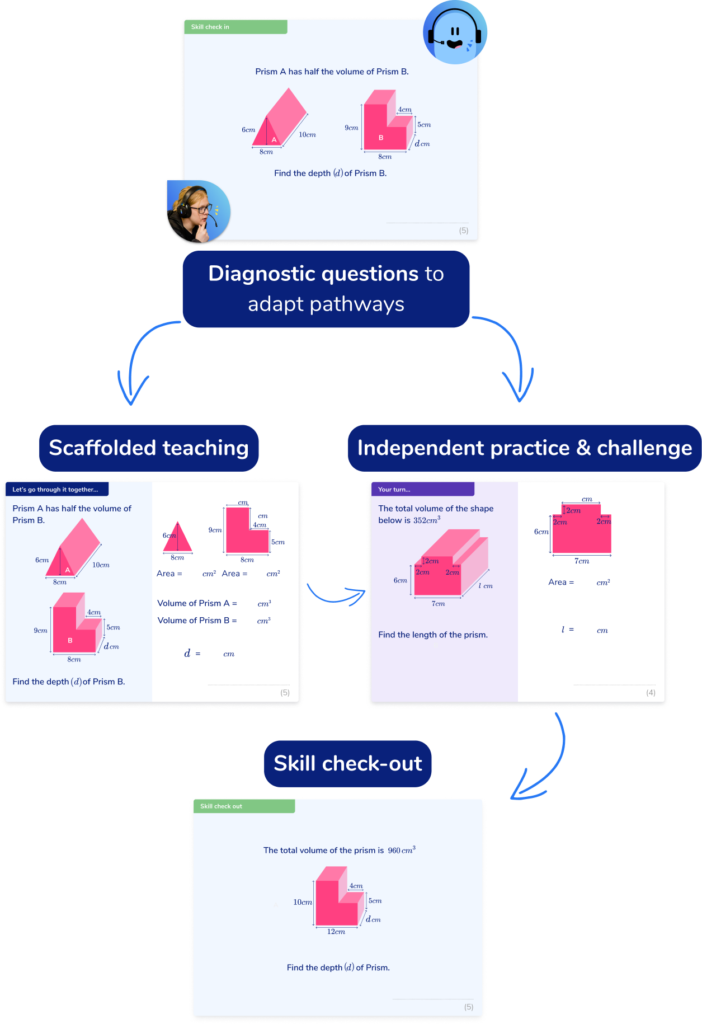

What scaffolded AI learning looks like in practice

If AI tools are to support learning, they need to follow a recognisable instructional pattern that maintains and encourages effortful thinking while helping pupils to solve problems independently.

Consider a GCSE algebra example:

Solve: 2(x + 3) = 14

A scaffolded AI system would:

- Pose the question

- Require the pupil to attempt an answer

- If incorrect, prompt: “What is the first step when expanding brackets?”

- Require a second attempt

- Provide further guidance if necessary

- Only then, present the full solution model.

At each stage, the pupil is actively thinking, and the AI tool supports this process without replacing the child’s effort. This sequence is important because it keeps the pupil engaged in the thinking processes essential for learning.
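The sequence above can be sketched as a short "hint ladder" in Python. This is a minimal illustration under stated assumptions, not how any particular product is implemented: the function name `run_scaffolded_question`, the wording of the second hint, and the exact-match answer check are all hypothetical.

```python
from typing import Iterable

QUESTION = "Solve: 2(x + 3) = 14"
HINTS = [
    "What is the first step when expanding brackets?",  # hint from the example above
    "Expanding gives 2x + 6 = 14. What could you do to both sides next?",  # assumed wording
]
MODEL_SOLUTION = "2(x + 3) = 14 -> 2x + 6 = 14 -> 2x = 8 -> x = 4"


def run_scaffolded_question(
    answers: Iterable[str],
    correct: str,
    hints: list[str],
    model_solution: str,
) -> list[str]:
    """Return the messages the system shows for one question.

    The pupil must attempt an answer before any support appears.
    Hints are given one at a time, and the full solution is shown
    only once the hints have run out or the pupil stops answering.
    """
    transcript = [QUESTION]
    remaining_hints = list(hints)
    for answer in answers:
        if answer.strip() == correct:
            transcript.append("Correct.")
            return transcript
        if not remaining_hints:
            break  # hints exhausted: fall through to the model solution
        transcript.append(remaining_hints.pop(0))
    transcript.append(model_solution)
    return transcript


# A pupil who is wrong once, then correct after the first hint.
print(run_scaffolded_question(["x = 7", "x = 4"], "x = 4", HINTS, MODEL_SOLUTION))
```

The design choice that matters is the ordering: the model solution is the last thing in the function, reachable only after the pupil has attempted the question and worked through the staged hints.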

Now compare this to a different type of AI system:

The pupil inputs the question. The system immediately outputs a complete worked example. No attempt or effort is required. The task appears finished, but real learning has not occurred.

This highlights why the scaffolded approach aligns so well with a familiar teaching pattern: I do, we do, you do.

- I do: demonstrate understanding

- We do: practise with guidance

- You do: try independently

Effective AI tools are designed to mirror this structure. They focus on fostering cognitive engagement rather than just speed.

AI tutoring with Skye

AI maths tutoring with Skye is built on exactly this model. Developed using insights from over 2.1 million one-to-one tutoring sessions, Skye delivers spoken, scaffolded lessons aligned to the national curriculum for KS2 and GCSE. When a pupil gets stuck, Skye does not answer – it provides a targeted hint based on the specific error, prompting the pupil to think again. Only after repeated attempts does it model the correct approach.

The impact of this approach is measurable. In an independent study with Educate Ventures Research, 92% of pupils successfully completed their post-session quiz, compared to 34% before the session, and 64% reported increased confidence by the end.

Because Skye’s content is created entirely by qualified teachers and maths specialists, schools can be confident that every lesson follows sound pedagogical principles – not AI-generated content. Sessions are recorded for safeguarding and available for unlimited use from £3,500 per year for primary and £5,000 for secondary.

How purpose-built AI tutoring differs from generic AI tools

Not all artificial intelligence applications are designed with learning in mind. General-purpose tools (e.g. ChatGPT, Gemini, Copilot) are technological systems optimised to provide complete answers. They are built on large language models trained on vast amounts of data. Their goal is to resolve queries quickly and effectively. That is their strength, but it is also their limitation in an educational context.

A purpose-built tutoring AI tool has a different goal: it is designed to leave the pupil more capable than when they started. It aims to build knowledge, develop critical thinking skills, and improve independent problem-solving and decision-making.

The difference is subtle in design, but significant in impact:

- General AI resolves the task (cognitive outsourcing)

- AI tutoring develops the learner’s ability

These produce fundamentally different interactions: with tutoring AI systems, the pupil remains active. The system structures the thinking, prompts analysis, and requires sustained reflective thought.

Skye, Third Space Learning’s AI maths tutor, is built around this principle. When a pupil answers incorrectly, it does not immediately provide the solution. It provides a targeted hint. It keeps the pupil engaged in the process of thinking and overcoming the problems they encounter. Only after repeated incorrect attempts does it model the correct approach.

This structure is based on over 2.1 million one-to-one tutoring sessions and follows the I do, we do, you do approach. It keeps learners engaged in the cognitive processes that underpin learning, not just completing tasks, but understanding them.

Practical strategies for keeping pupils cognitively active alongside AI

If schools are using AI tools, the goal is not to remove them but to structure their use so that thinking remains central. Practically, school leaders and teachers can focus their efforts on careful task design, educating pupils about the positives and negatives of AI usage and reviewing how AI is used in school.

1. Design tasks that require pupils to think, not just prompt

Teachers must be careful to design tasks that require pupils to engage in cognitive processes. For example, tasks should:

- Resist outsourcing: Some tasks naturally limit cognitive outsourcing. Problems requiring diagrams, reference to prior working, or peer discussion are harder for artificial intelligence applications to complete independently. These keep pupils engaged in thinking.

- Require explanations: If a pupil has used AI to get an answer, they will struggle to explain how the answer was derived. Tasks that ask pupils to talk through what they know and can do gain little from an AI-derived answer.

- Be process-focused: Pupils naturally want to find an answer, but tasks and feedback should centre on how they get to an answer. Require pupils to show their working and base feedback on the quality of their working processes.

- Include variability and transfer: Tasks should require pupils to apply their knowledge in slightly different or unfamiliar contexts, rather than repeating the same structure. This makes it harder to rely on AI-generated responses and instead requires pupils to adapt, connect ideas and think flexibly.

Each of these maintains cognitive engagement by keeping AI use within cognitive offloading rather than outsourcing. Each ensures that AI tools support learning, rather than replacing it.

2. Talk to pupils about AI dependence

Heavy reliance on AI is not primarily a behaviour issue; it is a metacognitive one. People tend to underestimate how much effortful thinking is required to develop cognitive abilities and retain knowledge. Pupils need to learn how their own memory works: that thinking – effortful, sometimes uncomfortable thinking – is what makes learning stick.

Using AI to get the answer is like watching someone else train in the gym. You see the movements. You know what is happening. But you do not build your own strength.

The same is true of cognitive processes. Without mental work, there is no development of knowledge, no strengthening of memory, no improvement in critical thinking.

Schools that build this understanding into their approach to AI technology – not just through policy, but through everyday classroom practice – are better placed to develop independent learners. For secondary pupils, the link to exams is direct. In GCSEs, there is no artificial intelligence available to help them. Pupils must rely on their own thinking, their own ability to problem solve and their own knowledge: the cognitive muscle needs to be built in lessons.

3. Reviewing AI use in your school: what to look for

For school leaders and subject leads, the key question is simple: What are pupils doing when they use AI tools?

There are practical signals to look for:

- Are pupils using AI tools to generate answers, or to check their own work?

- Can pupils explain the reasoning behind AI-supported responses?

- Are pupils engaging in sustained thinking, or moving quickly between tasks with minimal mental effort?

- Does the school’s approach distinguish between different types of AI systems?

For AI tutoring specifically:

- Does the tool report on where pupils need support within the process of solving problems?

- Can teachers see the sequence of hints, attempts, and corrections and how the pathway has been scaffolded?

- Does the system prioritise cognitive engagement or completion?

These are not technical metrics. They are indicators of learning.

Technology evolves quickly, and patterns of tool use change. Schools should make AI review a standing item in department meetings or curriculum discussions.

Engaging in cognitive processes

The question facing schools is not whether to use artificial intelligence but how to ensure pupils are still engaging in the necessary cognitive processes when using AI.

If AI-driven digital tools reduce mental effort, then they will reduce learning. However, if they are designed and used to support problem solving, sustain thinking, and strengthen cognitive processes, they can play a valuable role.

The next step is a practical one: take one AI tool currently used in your school. Apply the questions in this framework and ask, honestly: Is this tool supporting learning or replacing it?

Cognitive offloading FAQs

No. Cognitive offloading can support thinking by reducing unnecessary load. The issue is when it becomes outsourcing: when AI systems replace the cognitive processes that drive learning.

Traditional external tools support specific tasks. Modern AI-driven digital tools can complete entire problem-solving and decision-making processes, which has a much greater impact on thinking and learning.

It depends on the design. Tools that require active participation, sustained cognitive effort, and structured problem-solving reduce the risk.

Look for AI systems that prioritise thinking, require pupil input, provide scaffolded support, and build critical thinking skills, rather than tools that simply deliver answers quickly.
