What is the current evidence on AI tutoring and its impact on learners in school?

Is AI tutoring a transformative way to scale up one to one support in school? The government thinks so. But the truth is that, until very recently, there has been little evidence specifically on AI tutoring. As you’d expect, this is changing fast, and research is now under way into the efficacy of different models of AI tutoring.

Here we look at what we already know from the research about effective tutoring, and to what degree that is now being replicated and evidenced in AI tutoring.

Key takeaways

- One to one tutoring is one of the most effective interventions available to schools – the challenge has always been delivering it at scale and at an affordable cost.

- The research on what makes tutoring effective is clear: high-dosage, curriculum-aligned, conversational programmes with human oversight produce the strongest gains.

- Generic AI tools used for studying can harm learning – one RCT found students using ChatGPT performed around 17% worse in exams.

- Purpose-built AI tutoring systems designed around proven tutoring principles are showing measurable within-session learning gains.

- The DfE’s generative AI safety standards and the research evidence now point in the same direction – progressive disclosure, human oversight, and curriculum alignment are both regulatory requirements and markers of effective design.

- School leaders should evaluate any AI tutoring system against five practical questions before adopting.

The DfE’s stance on AI tutoring

In 2025, the Department for Education announced plans to trial AI tutoring tools with up to 450,000 disadvantaged students by 2027. This is one of the most significant EdTech commitments any government has made. The ambition is to close the achievement gap for students from disadvantaged backgrounds who cannot access private tutors.

The evidence base on which this decision was made is clear: one to one tutoring is one of the most effective and well-tested methods of closing the achievement gap. Until now, however, the challenge has been implementing one to one tutoring at scale and at an affordable cost. Using AI tutoring instead of traditional tutors makes this a real possibility.

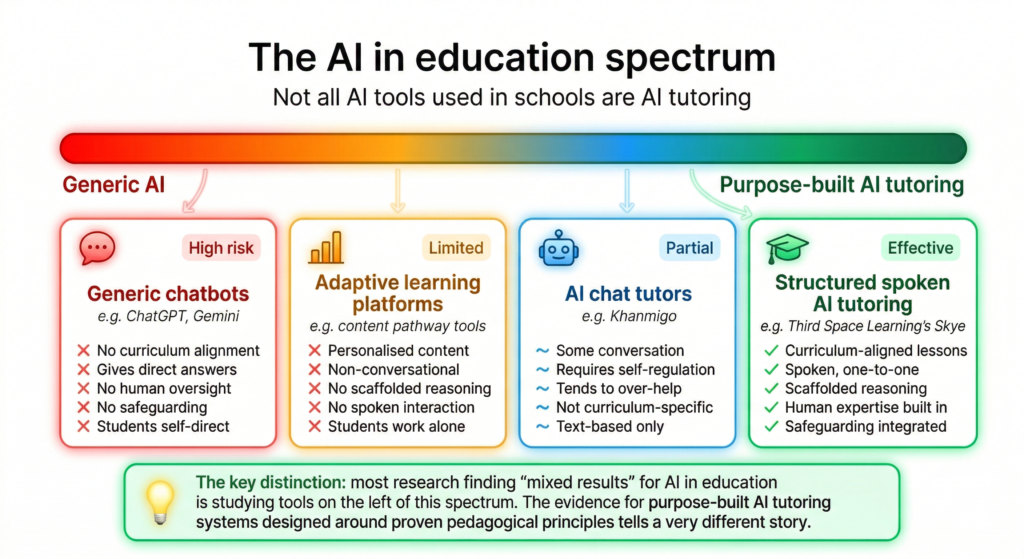

Defining AI tutoring

One of the issues we have when looking at AI tutoring evidence is that the term currently covers several different forms of AI-assisted instruction, from chatbot-style homework help to adaptive learning systems that personalise the content delivered based on an assessment of learning needs. These tools use generative AI to support students who, crucially, have high self-regulation and are actively seeking help to improve.

Our approach at Third Space Learning has been slightly different. We define AI tutoring very simply as seeking to replicate as closely as possible all those elements that have made traditional tutoring so effective at closing the attainment gap for all students, particularly those who have struggled with maths or those who are less likely to find their own routes to improvement.

We know what makes traditional tutoring effective. Our challenge as educators is to create an AI tutoring model built on the same principles and best practices.

What makes traditional tutoring effective

The evidence on what makes tutoring effective is well established, and it is the foundation for evaluating any AI tutoring system.

The EEF identifies one-to-one and small-group tutoring as one of the most effective interventions available to schools, with an average impact of five additional months’ progress. The characteristics that drive those gains are consistent across the research:

- High-dosage programmes

- One-to-one delivery

- Curriculum alignment

- Human oversight throughout

A 2026 review by Vanacore, Baker et al. examined what specifically makes human tutoring so effective. Their conclusion: it is the quality of the conversation. Effective tutors scaffold reasoning rather than explain answers. They sustain motivation through dialogue, adjust in real time and build the kind of trust that keeps students willing to struggle productively. Tutoring works because of what happens in the conversation, not simply because a student receives individual attention.

There is also a practical constraint that the research addresses directly. Human tutoring cannot scale without quality dropping. As programmes grow, impact tends to diminish – finding, training and retaining enough high-quality human teachers is the binding constraint.

The promise of AI tutoring is not that it replaces human tutoring, but that it may be able to deliver the conversational, structured, curriculum-aligned support the evidence calls for – at a scale human programmes have not been able to sustain.

What effective AI tutoring needs to look like

Given what the evidence tells us about effective tutoring, the requirements for AI tutoring become clear. An AI tutoring system needs to do more than deliver personalised content. It needs to hold a conversation.

Adaptive learning platforms have personalised content pathways for years. But they are fundamentally non-conversational – they lack the reasoning and feedback loop that makes human tutoring effective (Vanacore et al., 2026). A student working through an adaptive platform is receiving individualised instruction, but they are not being tutored.

On-demand chat tools present a different problem. They can converse, but they require students to self-regulate – to know what to ask, when to push deeper, and when to stop. Research shows that large language models tend toward over-helpfulness, reducing the metacognitive effort that produces actual learning (Vanacore et al., 2026). A tool that shortcuts the learning process is working against students, not with them.

Effective AI tutoring systems need to meet the same bar as effective human tutoring. They should be conversational, structured, curriculum-aligned, and built around human expertise. They need to scaffold reasoning rather than supply answers, sustain productive struggle rather than shortcut it, and keep teachers at the centre of the design – not as a bolt-on, but as the starting point.

This is the challenge schools face right now: finding an AI tutoring model that meets these criteria in practice, not just in principle. Most tools on the market were not designed around the tutoring evidence base – they were designed around what artificial intelligence can do, and then positioned as tutoring afterwards.

AI tutoring at Third Space Learning

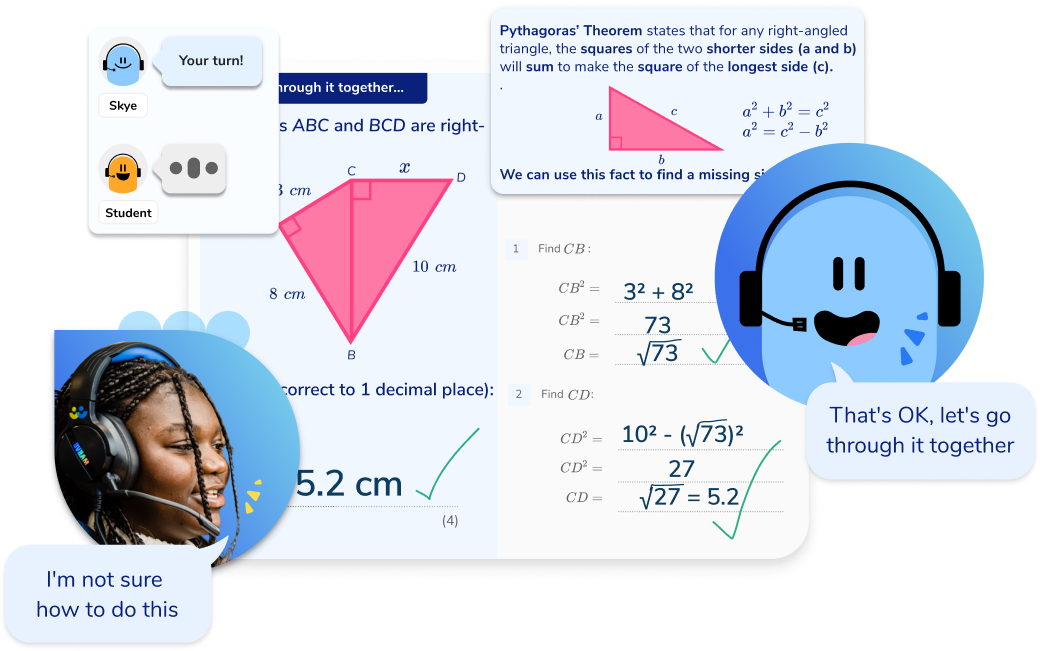

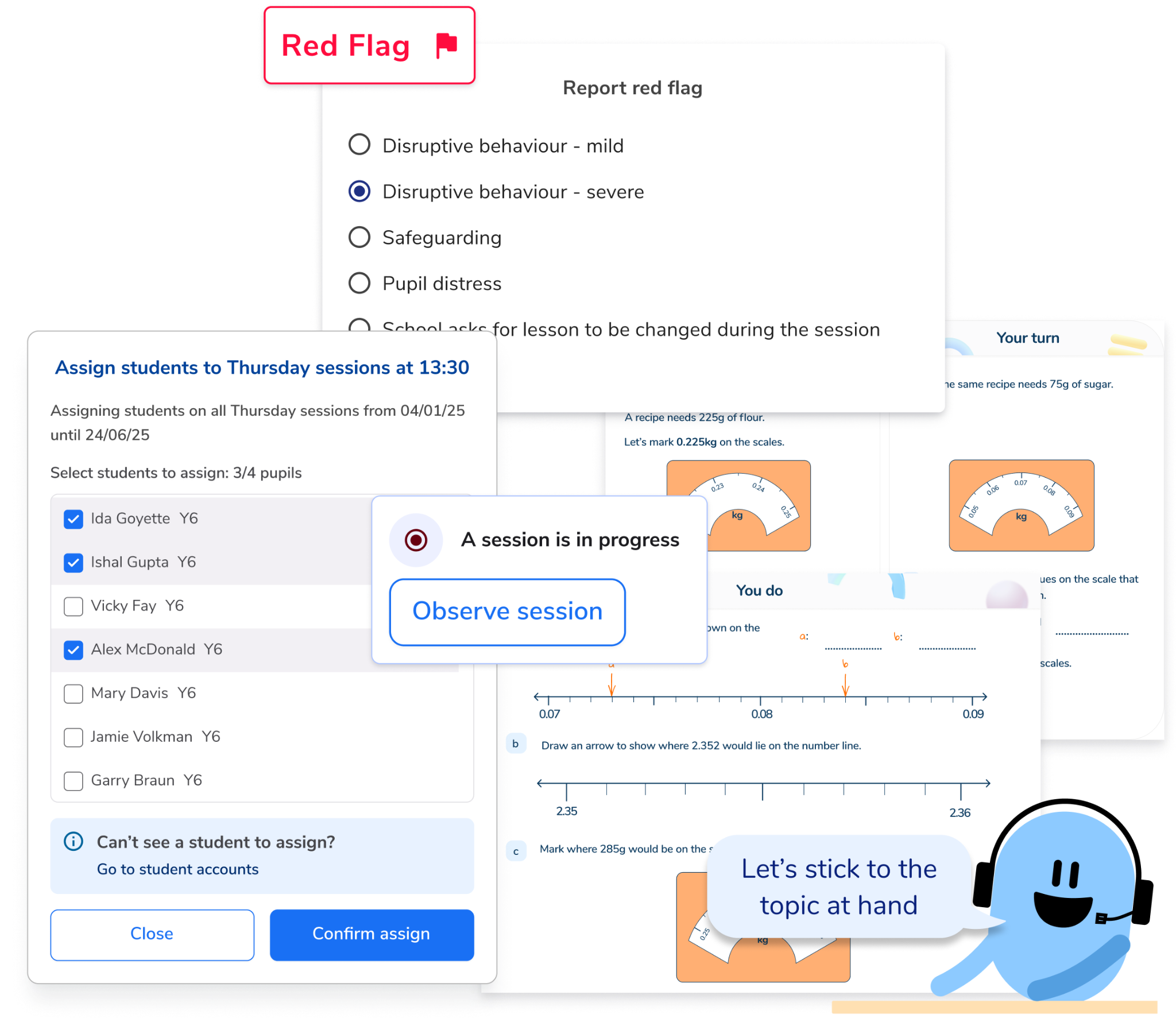

At Third Space Learning, this is what Skye is designed to do. Spoken AI tutoring sessions use teacher-written lessons aligned to the national curriculum. Skye speaks one-to-one with students, guiding them through their thinking using conversation, verbal hints and scaffolded steps – rather than providing step by step solutions that remove the need to think.

Where the AI tutoring research stands

The one to one tutoring evidence is settled. The AI-specific evidence is not, and school leaders need to understand why.

Much of the scepticism around AI tutoring stems from studies examining generic large language models in educational settings, not purpose-built tutoring systems designed around the principles the evidence calls for. There are many different approaches to and interpretations of AI tutoring and, to date, there has been little academic research into the kind of structured, school-led model we have been describing.

Most studies look at students using generative AI in self-directed settings, often with no deliberate curriculum alignment. These are much more likely to be generic AI tools used in an educational context – not tutoring systems designed to produce learning gains.

The research that does exist, however, is beginning to point in a consistent direction: when AI tutoring is designed around the same principles that make human tutoring effective, it can produce measurable learning gains.

Why the evidence on AI tutoring is still developing

Rigorous peer-reviewed academic research typically takes around 18 months from design to publication. In a field moving as fast as generative AI, most research is already outdated by the time it reaches schools. That alone explains why emerging evidence on AI tutoring remains thin, but the quality of the research that does exist is an equally significant problem.

Most studies on AI and student learning are methodologically weak. Many allow students to use the AI tool in the final assessment itself, which tells you nothing meaningful about whether real learning gains have taken place. Measuring student performance using the same tool used to study is not evidence of learning – most students who appear to perform well under these conditions are demonstrating task completion, not genuine understanding or critical thinking.

Where AI in education falls short

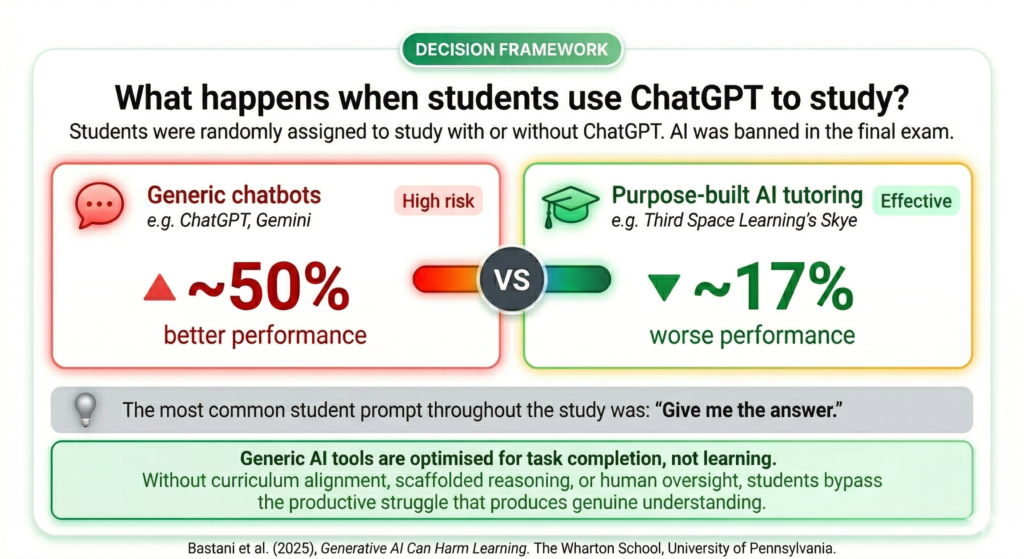

Bastani et al. (2024) conducted one of the first well-designed randomised controlled trials (RCTs) in this area. They randomly assigned students to use ChatGPT while studying and banned AI in the final exam to mirror real-world conditions. The control group studied without AI throughout.

Students who used ChatGPT during study performed ~50% better in practice sessions, but ~17% worse in the exam. The most common student prompt used throughout was “Give me the answer.”

This is the core problem with generic generative AI in education. Generative AI systems are optimised for task completion rather than to teach or improve student outcomes. Unlike well-designed AI tutoring systems, generative AI does not build retrieval practice into the learning process or promote learning through productive struggle.

Even purpose-built AI tutoring tools are not immune. A qualitative evaluation of Khanmigo, Khan Academy’s AI tutor, found it did not tailor tasks to individual students’ needs or ability, and did not support metacognitive learning skills (Vanacore et al., 2026). Being designed for education is not the same as being designed around the evidence on what makes tutoring effective.

So, when looking at the evidence, the question for school leaders is not whether AI works in education, but whether a specific AI tutor has been designed to produce positive student outcomes, and what independent evidence exists to show that it does.

Where carefully designed AI tutoring systems show promise

Not all the evidence points to failure. When AI tutoring is built with careful engineering and grounded in proven pedagogical best practices, the results look very different.

Kestin et al. (2025) published one of the strongest randomised controlled trials to date, comparing a carefully designed AI tutor with active learning in an introductory physics course for college students. Students were assigned to either the AI tutoring system or a flipped classroom with experienced course staff facilitating group instruction. Both groups covered the same introductory material over an entire course.

The AI tutor produced median learning gains more than double those of the active learning control group, with effect sizes ranging from 0.73 to 1.3 standard deviations – among the largest ever recorded in higher education research. Students in the AI tutoring condition also performed better on follow-up questions requiring advanced problem solving.
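For readers less familiar with effect sizes, the sketch below shows one common way a standardised effect size (Cohen's d, using a pooled standard deviation) can be calculated. It is an illustration only: the scores are invented, the function name `cohens_d` is our own, and Kestin et al. may have calculated their effect sizes differently.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment, control):
    """Difference in group means divided by the pooled sample standard deviation."""
    n_t, n_c = len(treatment), len(control)
    pooled_variance = (
        (n_t - 1) * stdev(treatment) ** 2 + (n_c - 1) * stdev(control) ** 2
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_variance)


# Hypothetical post-test scores, invented purely for illustration
ai_tutor_scores = [4.5, 5.0, 4.0, 5.5, 4.5, 5.0]
classroom_scores = [4.0, 4.5, 3.5, 5.0, 4.0, 4.5]

effect_size = cohens_d(ai_tutor_scores, classroom_scores)
print(f"Effect size: {effect_size:.2f} standard deviations")
```

With these made-up scores the two groups differ by half a mark on average, which works out at roughly 0.95 standard deviations because the spread of scores within each group is small.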

What matters is not that AI outperformed a classroom – it is that this tutoring system was built to facilitate active engagement, foster advanced cognitive skills and manage cognitive load rather than optimise for task completion. The underlying technology mattered less than the pedagogical design.

This study (published under a creative commons licence) carries important caveats. The context was higher education, not the primary and secondary education systems where most schools are making decisions. The learning experience for college students in a physics course is very different from the learning experience of a Year 5 student in a structured maths session.

Whether these results transfer to school settings with younger students remains to be seen. Early evidence from school-based tutoring systems, including the Educate Ventures Research evaluation below, suggests the same design principles hold – but successful implementation in schools demands additional safeguards and closer integration with human teachers.

Cognitive outsourcing and the case for purposeful design

The distinction between generic AI and purpose-built AI tutoring is not just theoretical.

Oakley and Sejnowski’s Memory Paradox (2025) identifies what they call cognitive outsourcing: when AI thinks for students, no learning takes place. Productive struggle is where active learning lives, and an AI tutor that uses hints, corrective probes and scaffolded steps to manage cognitive load is a fundamentally different product from one that simply provides direct answers.

Two studies in particular show what this looks like in practice.

Eedi and Google DeepMind RCT (2025)

One of the most significant recent contributions to the AI tutoring evidence base comes from the Eedi and Google DeepMind randomised controlled trial (November 2025). LearnLM, their carefully designed AI tutor, used a Socratic approach, prompting students to identify their own mistakes and explain their thinking in their own words rather than correcting them directly.

The Eedi and DeepMind study is a valuable contribution to the emerging evidence base. However, the model tested does not yet reflect the established evidence on effective, high-impact one-to-one human tutoring as identified by the EEF – specifically the importance of spoken, one-to-one, curriculum-aligned programmes.

In the trial, human tutors supervised every AI response and had the final say on every message. While they approved 82.3% of suggestions with no or minimal edits, this level of real-time human oversight is not scalable. For AI tutoring to reach the students who need it most, it must be able to deliver effective, structured sessions without requiring a human tutor to approve every interaction.

The thread running through both studies is consistent:

- Human oversight

- Curriculum-grounded design

- AI tutoring systems built to encourage active learning rather than passive instruction

These are not incidental features; they are what separates AI tutoring that produces improved student outcomes from AI that produces the appearance of them.

For school leaders evaluating AI tutoring, the research offers practical questions:

- Does the AI tutor guide students or answer for them?

- Is human expertise built into the design?

- Is the AI tutoring model scalable?

Evidence of within-session learning gains: Educate Ventures Research evaluation of Skye

The most credible AI tutoring evidence is independent evidence, not vendor-produced data.

For our own AI maths tutor Skye, we have had an independent evaluation from Professor Rose Luckin’s Educate Ventures Research – the kind of rigorous third-party scrutiny into AI tutoring the EEF calls for. The evaluation analysed 9,320 student sessions – representing substantial engagement across the programme – alongside interviews with six primary school teachers across varied implementation models, and a student survey.

What the data shows

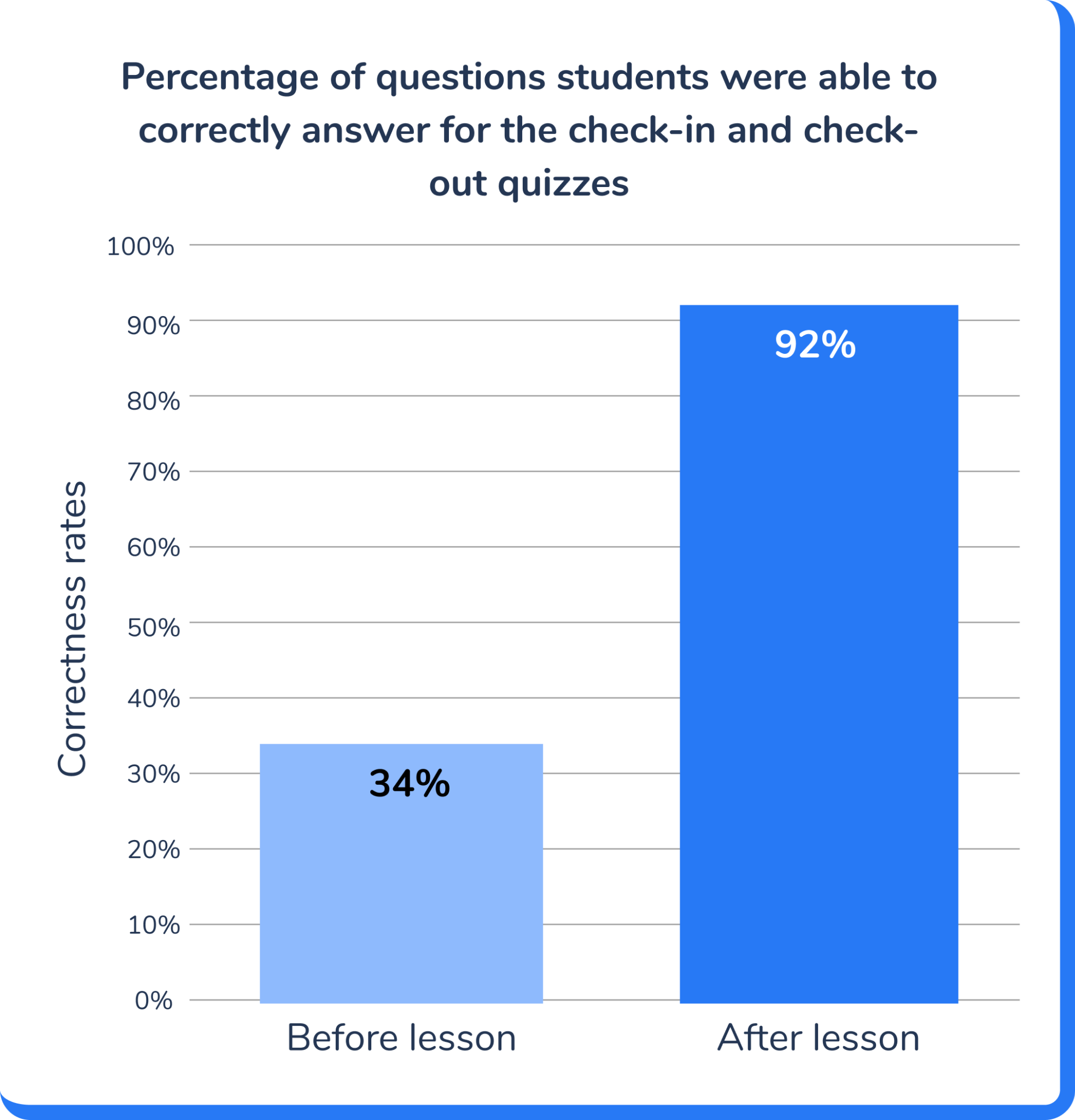

Educate Ventures Research found individual students improved from 34% accuracy on diagnostic check-in questions to 92% on check-out assessments within a single session, a substantial within-session learning gain. Educate Ventures Research is clear that this is not yet an estimate of standardised attainment impact – that would require a controlled design. What it demonstrates is strong, consistent within-session learning gains across a large dataset.
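The size of that change can be expressed in two ways that are easy to confuse: percentage points and relative increase. Here is a minimal sketch of the arithmetic, using the two accuracy figures quoted above. The function names are our own, chosen for illustration.

```python
def percentage_point_gain(before_pct: float, after_pct: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


def relative_increase(before_pct: float, after_pct: float) -> float:
    """Change expressed as a percentage of the starting value."""
    return (after_pct - before_pct) / before_pct * 100


check_in_accuracy = 34.0   # % correct on diagnostic check-in questions
check_out_accuracy = 92.0  # % correct on check-out assessments

points = percentage_point_gain(check_in_accuracy, check_out_accuracy)
relative = relative_increase(check_in_accuracy, check_out_accuracy)

print(f"{points:.0f} percentage points")     # 58 percentage points
print(f"{relative:.1f}% relative increase")  # 170.6% relative increase
```

Neither figure is an estimate of attainment impact. Both simply describe the change between check-in and check-out within a session.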

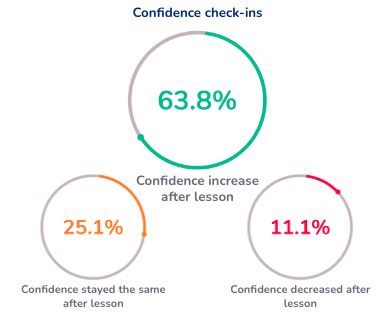

Confidence data reinforced this picture. 63.8% of students’ confidence outcomes ended higher than they began, with only 11.1% showing a decrease. Tracking the same students over time, roughly 66% showed session-on-session confidence increases.

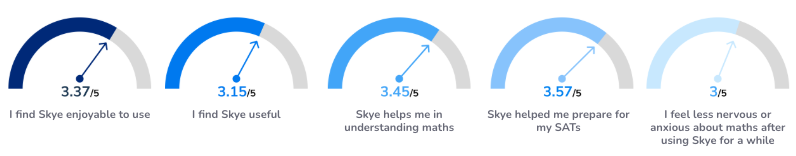

Students also rated the learning experience with AI maths tutor Skye positively across multiple dimensions:

- Helpful for understanding maths (3.45/5)

- Enjoyable to use (3.37/5)

- Helpful in preparing for SATs (3.57/5)

Who benefits most from AI tutoring?

Educate Ventures noted a particular benefit for struggling students and for anxious or quieter students who hesitate to ask for help in class. But the benefit extends beyond the most vulnerable – high-achieving learners also show improved confidence, particularly when working on challenging material they would not ordinarily ask for help with.

Educate Ventures observed that correction through AI maths tutoring felt less judgemental, making students more willing to engage, while keeping the relationship with the classroom teacher intact. School leaders interviewed in the study described Skye as a “force-multiplier for practice” and a “launchpad” for consolidation after human teaching.

Where the impact of AI tutoring still needs to be proved

The evaluation is honest about implementation challenges. EAL students and students with speech recognition difficulties face accessibility challenges, and students with behavioural needs require closer adult presence. Demonstrating standardised attainment impact would require a controlled design.

What the evaluation does demonstrate is strong, triangulated evidence of within-session learning gains.

What the DfE expects from AI tutoring

The DfE’s Generative AI: Product Safety Expectations and its Generative AI in Education policy paper (both updated June 2025) give school leaders a clear framework for evaluating any AI tutor. Crucially, the safety standards and the research evidence are pointing in exactly the same direction.

Student-facing generative AI tools must not provide direct answers or full solutions by default. Progressive disclosure, hints and prompts that require students to attempt the problem first are the standard expectation. These are precisely the active learning strategies that research shows are most effective at supporting critical thinking and genuine understanding in educational settings.

This is where one to one AI tutoring has the potential to transform education for students who are falling behind, adapting to each student’s pace and prior knowledge in a way that whole-class or group instruction cannot.

Human expertise remains non-negotiable. The DfE is explicit that “transparency and human oversight are essential to ensure AI systems assist, but do not replace, human decision-making.” A carefully designed AI tutor that keeps human teaching at its centre is not just good pedagogy; it is a compliance requirement.

Safeguarding is a statutory duty. AI tutoring must detect signs of student distress and follow appropriate pathways under KCSIE 2025 as a legal obligation. Data protection, age-appropriateness and curriculum alignment are equally explicit requirements for any AI tool used with students.

The practical implication for school leaders is straightforward. The behaviours the DfE’s regulations mandate – progressive disclosure, human oversight, curriculum alignment, safeguarding – are the same behaviours the evidence identifies as effective. An AI tutor that meets the compliance bar is, by design, one that is more likely to produce genuine learning gains.

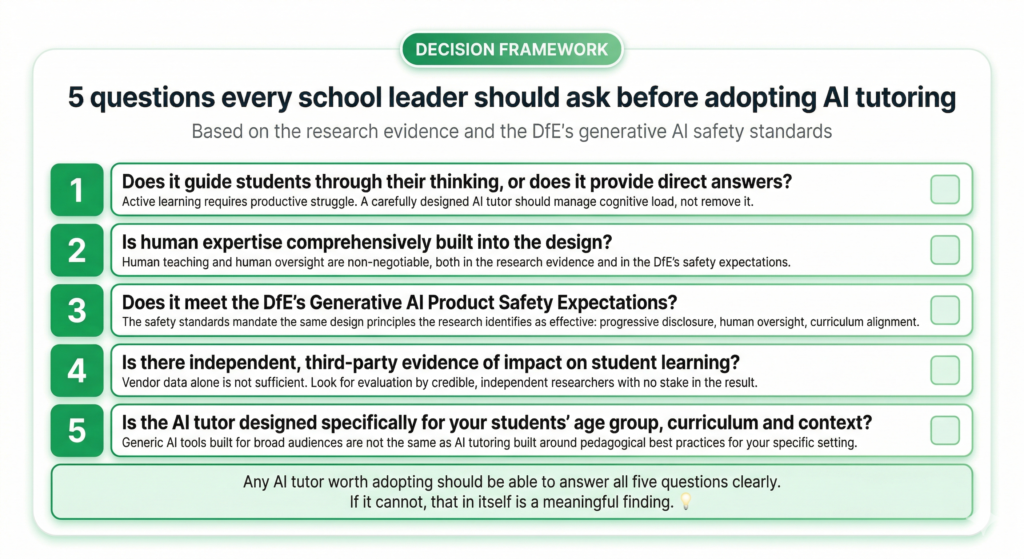

5 questions every school leader should ask before adopting AI tutoring

The research evidence and the DfE’s generative AI safety standards are now aligned. For school and MAT leaders evaluating AI powered tutoring, this makes the decision framework clearer than it has ever been.

Whichever AI tutoring system you are considering for your school, ask these five questions first:

- Does it guide students through their thinking, or does it provide direct answers?

Active learning requires productive struggle – a carefully designed AI tutor should manage cognitive load, not remove it. The underlying technology matters less than whether the AI tutor is designed to promote learning through active engagement.

- Is human expertise comprehensively built into the design?

Human teaching and human oversight are non-negotiable, both in the research evidence and in the DfE’s own safety expectations.

- Does it meet the DfE’s Generative AI Product Safety Expectations?

The safety standards mandate the same design principles the research identifies as effective – progressive disclosure, human oversight, and curriculum alignment.

- Is there independent, third-party evidence of impact on student learning and student outcomes?

Vendor data alone is not sufficient. Look for evaluation by credible, independent researchers with no stake in the result.

- Is the AI tutor designed specifically for your students’ age group, curriculum and educational settings?

Generic AI tools built for broad audiences are not the same as AI tutoring built around pedagogical best practices for your specific context and curriculum.

Any AI tutor worth adopting should be able to answer all five questions clearly. If it cannot, that in itself is a meaningful finding.
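For leaders comparing several products, the five questions can be recorded as a simple yes/no checklist. The sketch below is one hypothetical way to do that. The class name, field names and example product are our own inventions and are not part of any DfE or EEF framework.

```python
from dataclasses import dataclass, fields


@dataclass
class AITutorEvaluation:
    """Yes/no answers to the five questions for one product (hypothetical structure)."""
    guides_thinking: bool           # guides students rather than giving direct answers
    human_expertise_built_in: bool  # human teaching and oversight are part of the design
    meets_dfe_expectations: bool    # meets the DfE's Generative AI Product Safety Expectations
    independent_evidence: bool      # evaluated by independent, third-party researchers
    built_for_context: bool         # designed for your age group, curriculum and setting


def failed_questions(evaluation: AITutorEvaluation) -> list[str]:
    """Return the names of the questions answered 'no', in question order."""
    return [f.name for f in fields(evaluation) if not getattr(evaluation, f.name)]


# Invented example: a product that has not yet been independently evaluated
example = AITutorEvaluation(
    guides_thinking=True,
    human_expertise_built_in=True,
    meets_dfe_expectations=True,
    independent_evidence=False,
    built_for_context=True,
)

print(failed_questions(example))  # ['independent_evidence']
```

An empty list means the product answered all five questions. Anything else shows where to press the supplier for more detail.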

The verdict on AI tutoring: what the evidence actually says

The government’s ambition is real, and the need is urgent. According to the DfE, only 25.6% of disadvantaged students achieved a strong pass in English and maths at GCSE in 2024–25, compared to 52.8% of non-disadvantaged peers. That achievement gap cannot wait, and AI tutoring could be part of closing it – but only if the AI tutoring systems schools adopt are built on evidence, not promise.

Where AI tutoring has been built to guide students, manage cognitive load and keep human expertise at the centre, the emerging evidence shows real improvements in student learning and outcomes. Where it hasn’t, the evidence is equally clear – students do worse.

The path forward is not about whether the technology is ready. It is about whether the tutoring systems schools adopt have been designed with the same rigour the evidence demands. AI has the potential to make high quality one to one tutoring available to all students who need it. But only if we build these tutoring systems on evidence, not hype.

AI tutoring FAQs

It’s OK to use AI as a tutor when it is carefully designed around proven pedagogical principles. That means guiding students rather than providing direct answers, aligning to the curriculum, and keeping a qualified teacher in the loop. The evidence supports this approach.

The strongest evidence shows that human connection and human expertise remain essential in AI tutoring. Effective AI tutors are designed to complement human teaching. They act as a force multiplier for practice and consolidation, not a replacement for the teacher–student relationship.

No. ChatGPT is not an intelligent tutoring system; it is a large language model. ChatGPT is designed to respond, not to teach. One RCT found that students using ChatGPT to study performed around 17% worse in exams than those who did not. A carefully designed AI tutor works very differently, using active learning strategies and high quality explanations to build genuine understanding rather than providing introductory material without retrieval practice.

AI tutoring and project based learning serve different purposes. Tutoring systems are most effective for building core knowledge – particularly in maths, where students need structured practice. The most effective approach uses AI tutoring to strengthen foundational understanding so students engage more confidently in work requiring collaboration skills and applied thinking.

References

Bastani, H., Bastani, O., Muharremoglu, A. and Sinha, A. (2024). Generative AI Can Harm Learning. The Wharton School, University of Pennsylvania.

Department for Education (2025). Key Stage 4 Performance: 2024 to 2025. GOV.UK.

Department for Education (June 2025). Generative AI in Education. GOV.UK.

Department for Education (June 2025). Generative AI: Product Safety Expectations. GOV.UK.

Eedi and Google DeepMind (November 2025). New Exploratory Research from Eedi and Google DeepMind Reveals Human-in-the-Loop AI Tutoring Outperforms Human-Only Support.

Education Endowment Foundation (2024). Teaching and Learning Toolkit: One-to-one Tuition. EEF.

Kestin, G. et al. (2025). AI tutoring outperforms active learning in a randomised controlled trial. Published under creative commons licence.

Luckin, R. et al. (2025). A Case Study on High-Impact AI Maths Tutoring from Third Space Learning. Educate Ventures Research.

Oakley, B. and Sejnowski, T. (2025). The Memory Paradox: Why Our Brains Need Knowledge in an Age of AI.

Vanacore, K., Baker, R.S., Closser, A.H. and Roschelle, J. (2026). “The path to conversational AI tutors: integrating tutoring best practices and targeted technologies to produce scalable AI agents.” arXiv:2602.19303v1.

The Science of Learning Podcast (2025). AI in Education with Daisy Christodoulou.
